Serverless and data stack (2) -- toward building a 'serverless' OLTP engine

On reading the SIGMOD'17 paper from the AWS Aurora team

In a previous post, we discussed an academic paper in which a group of researchers and industry practitioners at UC Berkeley argued that the ‘serverless’ paradigm will prevail in the cloud and capture much of the cloud market’s growth.

Since our last post briefly discussed the serverless paradigm for the data warehouse (OLAP engine) using an academic paper from Snowflake, I felt it would be interesting to share my reading of an academic paper from the AWS Aurora team: “Amazon Aurora: Design Considerations for High Throughput Cloud-Native Relational Databases” (SIGMOD’17).

Before reading the paper…

1. We will focus only on AWS RDBMS offerings

Since the paper is about building Aurora (a cloud RDBMS) at AWS, we focus only on relational database management system (RDBMS) offerings. We will not cover other categories of databases, such as AWS DynamoDB or MongoDB on AWS.

We will go through the options for running a database on AWS: those that do not involve Aurora and those that do.

2. Database as a Service (DBaaS) from AWS other than Aurora

Before the RDS era (not a DBaaS): technically speaking, this is your in-house DBA working on virtual machines. Your in-house expertise takes care of every bit of hands-on DBA work; you only skip the hardware purchasing and OS installation.

(Transitional) RDS* 👇: with this option, you probably do not need a full-time DBA any more. You only need to know some DBA concepts, such as failover, backup, snapshots, and capacity planning, and configure your RDS instances according to your requirements.

Note: *though Aurora is an entirely different database engine under the hood, AWS offers it as one of the RDS options in the AWS console.

3. AWS Aurora

Aurora (AWS puts it under the RDS console)* 👆: with this option, users no longer need to plan storage space or IOPS capacity; Aurora autoscales both on their behalf. The SIGMOD’17 paper discusses the internals of this product.

One benefit of this architecture is it eliminates the need to pre-provision storage for future growth, and it eliminates the need to provision storage to ensure there will be enough IOPS for peak workloads. Instead, Aurora Storage automatically grows, automatically redistributes data based on hotspots, and automatically fixes failed components, all without any intervention needed by the end user or DBA.

Aurora Serverless: this is a further step beyond Aurora. With this product, users no longer need to manage database engine nodes. Operationally, this offering becomes truly “serverless”, one step beyond the serverful Aurora (the SIGMOD’17 paper does not discuss this step, but we will briefly cover it later).

Key innovations revealed in the paper

If you are interested in why cloud vendors can provide data stack services in a ‘serverless’ way, please read this section. If you just want to be a user of those stacks, skip ahead to the user takeaway section.

1. Solving the hard problem for OLTP systems: building a multi-tenant scale-out storage service layer

One of the key takeaways of this paper is that compute should be decoupled from storage, and that the storage layer needs to be a multi-tenant system.

(in Section 2.2: Segmented Storage) A storage volume is a concatenated set of PGs, physically implemented using a large fleet of storage nodes that are provisioned as virtual hosts with attached SSDs using Amazon Elastic Compute Cloud (EC2).

In short, the storage layer itself is still built on top of regular compute nodes (which means it remains expensive if nothing else is done).
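
To make the segmented layout concrete, here is a minimal Python sketch of how a byte offset in a volume could map to a protection group (PG). It assumes the layout described in Section 2.2 of the paper: 10 GB segments, each replicated six ways, with two copies in each of three AZs. The function names, the AZ labels, and the choice of 1 GB = 2^30 bytes are my own assumptions for illustration, not Aurora’s actual code.

```python
from dataclasses import dataclass
from typing import List

GIB = 1024 ** 3
SEGMENT_SIZE_BYTES = 10 * GIB        # 10 GB segments (Section 2.2); GiB is an assumption
REPLICAS_PER_PG = 6                  # six copies per protection group
AZS = ("az-a", "az-b", "az-c")       # hypothetical AZ labels


@dataclass(frozen=True)
class SegmentReplica:
    pg_index: int   # which protection group this replica belongs to
    az: str         # availability zone holding this replica
    replica: int    # replica number within the PG, 0..5


def pg_for_offset(offset: int) -> int:
    """Return the index of the PG that holds the given byte offset."""
    if offset < 0:
        raise ValueError("offset must be non-negative")
    return offset // SEGMENT_SIZE_BYTES


def pgs_needed(volume_bytes: int) -> int:
    """Number of PGs a volume of this size spans (ceiling division)."""
    return -(-volume_bytes // SEGMENT_SIZE_BYTES)


def replicas_for_pg(pg_index: int) -> List[SegmentReplica]:
    """Place six replicas of one PG, two per AZ."""
    return [
        SegmentReplica(pg_index, AZS[i // 2], i)
        for i in range(REPLICAS_PER_PG)
    ]


if __name__ == "__main__":
    print(pgs_needed(25 * GIB))        # 3
    print(pg_for_offset(15 * GIB))     # 1
    for r in replicas_for_pg(1):
        print(r)
```

Growing a volume in this model just means appending another PG, which is one way to picture why users no longer pre-provision storage.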

(in Section 5: Putting It All Together) …The storage service is deployed on a cluster of EC2 VMs that are provisioned across at least 3 AZs in each region and is collectively responsible for provisioning multiple customer storage volumes…

Though the storage layer uses EC2 instances, it follows a shared pool model, so its cost is amortized across tenants.
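
A toy calculation may help show why pooling amortizes cost. All numbers and function names below are hypothetical, and the model ignores replication and IOPS; it only compares giving every tenant its own node with packing tenants onto shared nodes.

```python
import math
from typing import Sequence


def dedicated_nodes(used_gb: Sequence[float], node_capacity_gb: float) -> int:
    """Nodes needed when every tenant gets its own node(s)."""
    return sum(math.ceil(u / node_capacity_gb) for u in used_gb)


def pooled_nodes(used_gb: Sequence[float], node_capacity_gb: float,
                 headroom: float = 1.2) -> int:
    """Nodes needed when tenants share a pool, with some spare headroom."""
    total = sum(used_gb) * headroom
    return math.ceil(total / node_capacity_gb)


if __name__ == "__main__":
    tenants = [50, 120, 30, 200]   # hypothetical per-tenant usage in GB
    capacity = 1000                # hypothetical node capacity in GB
    print(dedicated_nodes(tenants, capacity))  # 4
    print(pooled_nodes(tenants, capacity))     # 1
```

With these made-up numbers, four mostly empty dedicated nodes collapse into one shared node, which is the amortization effect in miniature.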

2. Design the database logs smartly to preserve the transactions yet optimize performance in a distributed environment

The paper describes other innovations that set Aurora apart; we briefly list them here for those interested in database or distributed system internals:

Logs and optimization of log operations across different components e.g.,

which operations must be synchronous and which operations can be asynchronous;

how to reduce the number of commits across the network.

Please read Section 3 (“The Log Is the Database”) and Section 4 (“The Log Marches Forward”).
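
As a rough illustration of the synchronous versus asynchronous split, the paper describes a quorum scheme with six copies of each data item, a write quorum of 4, and a read quorum of 3 (Section 2.1). The sketch below, with hypothetical function names and made-up latencies, shows that a write only has to wait for the fourth-fastest acknowledgment, so one slow or failed node does not hold it back.

```python
from typing import Optional, Sequence

COPIES = 6         # V: copies of each data item
WRITE_QUORUM = 4   # Vw
READ_QUORUM = 3    # Vr


def quorums_are_consistent(v: int, vw: int, vr: int) -> bool:
    """Check the two quorum rules: Vr + Vw > V and Vw > V / 2."""
    return vr + vw > v and vw > v / 2


def write_latency_ms(ack_latencies_ms: Sequence[Optional[float]],
                     write_quorum: int = WRITE_QUORUM) -> Optional[float]:
    """Latency until a write reaches quorum, or None if it never does.

    Each entry is the ack latency of one storage node; None means the
    node did not acknowledge (for example, it is down).
    """
    acks = sorted(l for l in ack_latencies_ms if l is not None)
    if len(acks) < write_quorum:
        return None
    # The write is durable as soon as the quorum-th fastest ack arrives.
    return acks[write_quorum - 1]


if __name__ == "__main__":
    print(quorums_are_consistent(COPIES, WRITE_QUORUM, READ_QUORUM))  # True
    # One node is slow (40 ms) and one is down; the write still completes.
    print(write_latency_ms([3, 5, 4, 40, None, 6]))                   # 6
    # Three nodes down: quorum cannot be reached.
    print(write_latency_ms([3, 5, None, None, None, 6]))              # None
```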

Beyond the paper: after the storage problem is solved, the engine layer can go ‘serverless’ as well

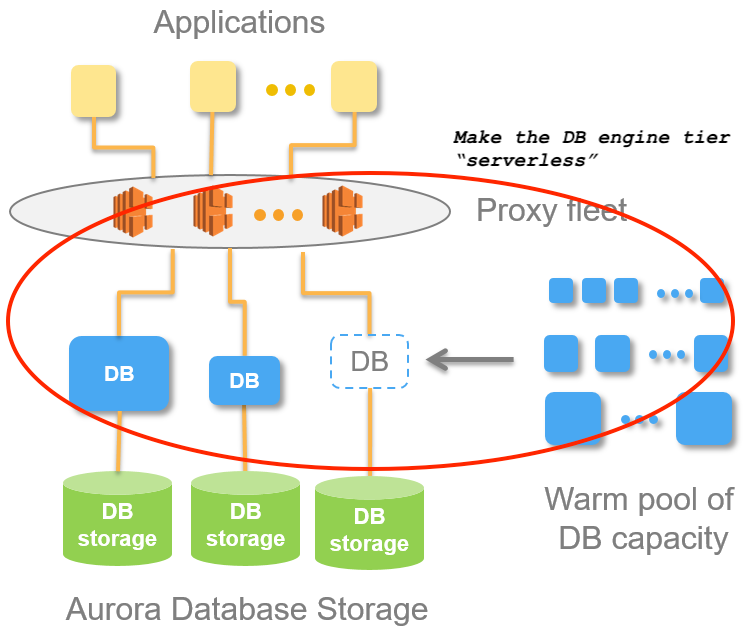

This SIGMOD’17 paper shows how the team solved the hard problem of running the database tier on a shared resource, namely the storage tier. It does not discuss how the database engine tier could be provisioned in a “serverless” manner.

To understand how AWS is working on it, please read their technical blog. I would not be surprised if they later submit another academic paper to the SIGMOD conference 🤓. The accompanying diagram shows how the DB engine can be provisioned from a pool of engine nodes.

Users Takeaway

We already use function as a service (FaaS) offerings such as AWS Lambda, and it is tempting to think that cloud vendors have only solved the easy problem, the compute tier (easy because it is stateless). But the serverless paradigm for the database tier is already around the corner.

Therefore, even if your organization has not yet embarked on the serverless journey, or has not even fully transitioned to the cloud, as an engineer who cares about building products in a cost- and time-efficient way, you may want to pay attention to this new trend in cloud infrastructure.

Besides sharing the paper and the under-the-hood mechanisms of the AWS Aurora system, another goal of this post is to give readers some technical assurance that the serverless paradigm is not a fad, and that cloud vendors are cracking the hard problems one by one.

Summary:

The academic paper describing how AWS Aurora is built under the hood sheds light on how to build a cloud-native database system.

Though the paper does not cover the last mile to a serverless Aurora, it already reveals the solution to the hard problem of building such a system (i.e., how to use a pool-model storage backend).

Please feel free to share your thoughts on the data stack and the shift toward the serverless paradigm.